AI Rewrites the Possibilities of Digital Twin

Developers of the virtual design tool see AI as providing the catalyst for a major shift in product development.

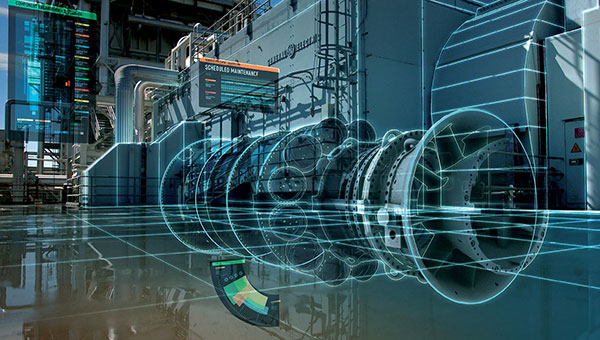

Fig. 1: Engineers can build digital twins of complex physical assets using design, manufacture, inspection, sensor and operational data. The accuracy of the digital twin increases over time as more data refines the AI model. Image courtesy of GE Global Research.

January 31, 2020

Digital twin technology promises to transform design and give product developers, manufacturers and businesses a 360-degree view of products and systems throughout the entire lifecycle (Fig. 1). Armed with an enriched pool of data provided by the Internet of Things (IoT), the design technology stands poised to deliver previously impossible opportunities. But there’s a catch: A key capability is missing from conventional modeling and simulation tools.

For the technology to deliver on its promise, it must be able to run analytics in real time or faster, provide a high degree of prediction accuracy and integrate data from a collection of disparate and often incompatible sources.

Unfortunately, meeting these goals lies beyond the reach of traditional design technologies. To address the new demands, designers are turning to artificial intelligence (AI), the missing element in the engineer’s toolbox.

But even with the current crop of AI technologies, developers and analysts have to perform a number of balancing acts. For instance, they have to find algorithms that can achieve the right balance of speed and accuracy. They must also acknowledge that the size of the data pool sometimes matters less than the quality of the data in it.

Furthermore—and this may make or break the technology’s success—digital twin software providers will have to find a way to reduce implementation demands so that more users can enjoy the benefits of the technology.

What Is AI’s Contribution?

How can AI help to fulfill the promise of the digital twin? Developers of the virtual design tool see AI as providing the catalyst for a major shift in product development.

“With machine learning (ML), we can create models based on observed behavior and historical data rather than just the design information,” says Bhagat Nainani, group vice president of IoT and blockchain application development at Oracle. “We can also use historical asset data and real-time sensor readings from multiple assets to help detect anomalies and make failure predictions, preventing unplanned downtime.”
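As a rough illustration of the anomaly detection Nainani describes, the sketch below flags live sensor readings that stray too far from an asset's historical baseline. It is a minimal z-score check, not Oracle's method. The function name `find_anomalies`, the three-sigma threshold and the temperature values are all assumptions made for the example.

```python
from statistics import mean, stdev

def find_anomalies(history, live, threshold=3.0):
    """Return (index, value) pairs from `live` that deviate from the
    historical mean by more than `threshold` standard deviations."""
    mu = mean(history)
    sigma = stdev(history)
    return [(i, x) for i, x in enumerate(live) if abs(x - mu) > threshold * sigma]

# Hypothetical bearing temperatures (degrees C) logged during normal operation.
history = [70.1, 69.8, 70.4, 70.0, 69.9, 70.2, 70.3, 69.7]
# Hypothetical real-time readings from the same sensor.
live = [70.0, 70.5, 75.2, 69.9]

anomalies = find_anomalies(history, live)
print(anomalies)  # [(2, 75.2)]
```

A production system would typically learn a richer model from many assets, but the principle is the same: historical data defines expected behavior, and real-time readings are scored against it.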

Technology developers see AI as a way to accelerate design processes, allowing engineers to quickly evaluate many possible design alternatives. Digital twin design software providers contend that, by changing design parameters and rerunning AI algorithms, engineers could quickly identify the best fits based on the results.
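The idea of sweeping design parameters through a fast model can be sketched in a few lines. In this hypothetical example, `predicted_cost` stands in for a trained AI model that scores a design. The two parameters, their ranges and the cost formula are invented purely for illustration.

```python
from itertools import product

def predicted_cost(length, width):
    """Hypothetical stand-in for a trained model that scores a design
    (lower is better). A real model would be learned from data."""
    return (length - 3.0) ** 2 + 2.0 * (width - 1.5) ** 2 + 0.5

# Candidate values for each design parameter (assumed ranges).
lengths = [2.0, 2.5, 3.0, 3.5, 4.0]
widths = [1.0, 1.25, 1.5, 1.75, 2.0]

# Evaluate every combination and keep the best-scoring design.
candidates = list(product(lengths, widths))
best = min(candidates, key=lambda design: predicted_cost(*design))

print(len(candidates), "designs evaluated; best:", best)
```

Because each evaluation is cheap, an exhaustive sweep like this is feasible here. With a full simulation behind every call, the same loop could take days.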

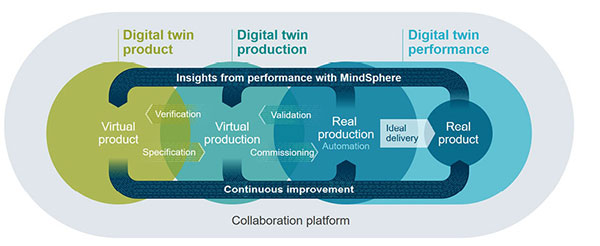

They also claim that designers will be able to run AI solutions on existing designs to uncover properties not considered during the initial design phase and then use the findings to improve the product or system (Fig. 2).

The use of AI in this context is still in the early stages. Most successful digital twin technologies use AI systems to make predictions in situations where data is abundant and where the processes being evaluated are relatively simple. But this is changing.

“We are in the midst of a second wave of digital twin technologies, which has truly game-changing attributes,” says Juan Betts, managing director of Front End Analytics. “In this new framework, the digital twin is not just predicting overall product performance based on user preferences, but it is also adapting and predicting the performance and state of key individual components of the system to achieve user-specific individualized performance enhancements.”

A good way to see how these benefits are delivered is by viewing the various stages of a digital twin implementation. This examination begins with conventional computer design tools and migrates to more cutting-edge AI-based processes.

The typical starting point for implementing a digital twin is an existing 3D simulation model, created using a product lifecycle management (PLM) platform or CAD tool. These models typically describe what the physical entity will look like and provide dimensions and possibly descriptions of materials to be used in construction.

In Pursuit of Simplicity and Speed

The engineer—or sometimes the analyst—then begins to create a digital twin of the physical product by overlaying the existing 3D simulation model with real-time data from associated sensors, deployment details and operational conditions.

At this point, the engineer encounters a major challenge. Conventional design software often takes hours or even days to complete simulations using the operational and sensory inputs required to create the digital twin. As a result, tasks such as design optimization, design space exploration and what-if analyses become impractical because they simply require too many simulations.

To bypass this obstacle, digital twin developers implement an AI-driven process called surrogate modeling—also known as reduced order modeling—which mimics the behavior of complex simulation models as closely as possible in a less computationally intensive way.

The analyst constructs surrogate models using a data-driven, bottom-up approach, taking the critical aspects of a detailed model and reducing them to simpler algorithms that are executed in real time or faster.

Multiple techniques can come into play here, with the engineer combining computations from online and offline phases and using decomposition methods. The surrogate model can use truncation, subspace and response surface methodologies, neural networks and ensemble-based heuristics.
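To make the response surface idea concrete, here is a minimal sketch under stated assumptions. A handful of runs of a slow model are collected offline, a quadratic is fitted to them by least squares, and the cheap polynomial is then evaluated online. The function `expensive_simulation` is a hypothetical stand-in for a full simulation, and the sample points are arbitrary.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def fit_quadratic(xs, ys):
    """Least-squares fit of y = c0 + c1*x + c2*x^2 via the normal equations."""
    basis = [[1.0, x, x * x] for x in xs]
    ata = [[sum(r[i] * r[j] for r in basis) for j in range(3)] for i in range(3)]
    aty = [sum(r[i] * y for r, y in zip(basis, ys)) for i in range(3)]
    return solve(ata, aty)

def expensive_simulation(x):
    """Hypothetical stand-in for a simulation run that would take hours."""
    return 2.0 + 0.5 * x + 0.25 * x * x

# Offline phase: sample the slow model at a few design points.
samples = [0.0, 1.0, 2.0, 3.0, 4.0]
responses = [expensive_simulation(x) for x in samples]
c0, c1, c2 = fit_quadratic(samples, responses)

# Online phase: the surrogate is a cheap polynomial evaluation.
def surrogate(x):
    return c0 + c1 * x + c2 * x * x

print(surrogate(2.5), expensive_simulation(2.5))
```

The stand-in model here happens to be exactly quadratic, so the fit is essentially perfect. Real simulations are not, which is why practitioners also turn to the neural network and ensemble methods mentioned above.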

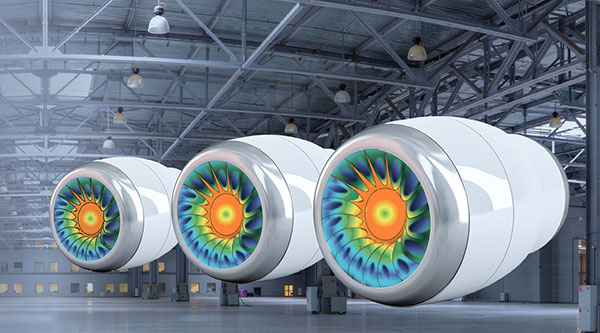

The analyst trains each of the models with one or a combination of data types, including simulation, experimentation, field-test and product-operational data (Fig. 3). Model training calibrates the simulation outputs so that their predictions are more accurate.

These techniques help the engineer to generate a surrogate model, which delivers a number of important advantages.

“Due to that acceleration enabled by surrogate models, development teams can explore many architectural implementations and verify the results in the same amount of time it would have taken for one original simulation run,” says Martin Witte, senior principal key expert, system engineering and simulation at Siemens Digital Industries Software.

The goal is to reduce the computational complexity of the mathematical models while still emulating complex full-order models or processes, capturing essential features of the phenomena, often without calculating all full-order modeling details.
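One way to picture this reduction is mode truncation: find the dominant pattern in a set of full-order results and describe each state by a single coefficient on that pattern. The sketch below uses power iteration on a few snapshot vectors. The five-point "deflection" snapshots are invented, and a practical reduced-order model would usually keep several modes rather than one.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def dominant_mode(snapshots, iterations=100):
    """Power iteration for the leading eigenvector of the snapshot
    correlation matrix (the sum of s * s^T over all snapshots s)."""
    n = len(snapshots[0])
    v = [1.0] * n
    for _ in range(iterations):
        w = [0.0] * n
        for s in snapshots:
            coeff = dot(s, v)
            for i in range(n):
                w[i] += coeff * s[i]
        norm = math.sqrt(dot(w, w))
        v = [x / norm for x in w]
    return v

def project(state, mode):
    """Reduce a full state vector to one modal coefficient."""
    return dot(state, mode)

def reconstruct(coeff, mode):
    """Rebuild an approximate full state from the modal coefficient."""
    return [coeff * m for m in mode]

# Hypothetical full-order results: one deflection shape at four load levels.
shape = [1.0, 2.0, 3.0, 2.0, 1.0]
snapshots = [[load * x for x in shape] for load in (1.0, 1.5, 2.0, 2.5)]
mode = dominant_mode(snapshots)

# A new state is stored as one number instead of five.
new_state = [3.0 * x for x in shape]
coeff = project(new_state, mode)
error = max(abs(a - b) for a, b in zip(new_state, reconstruct(coeff, mode)))
print(coeff, error)
```

In this contrived case every snapshot is a scaled copy of the same shape, so one mode reproduces the state to within rounding error. The reduced model captures the essential feature without carrying every full-order detail.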

Surrogate models use real-world data to optimize design parameters, predict behavior changes—such as those caused by aging—and update the digital twin accordingly. Engineers can use this approach to sense and interpret the real-world conditions and operating parameters of the physical device and feed their insights back into the digital twin.

After these steps are completed, the engineer links the surrogate models, algorithms and other physical data into a product model. The digital twin is updated based on new real-time data and usage data from deployed assets. To ensure this model doesn’t get stale, engineers execute AI algorithms periodically to update the digital twin operational model.
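The periodic update can be illustrated with a deliberately simple stand-in for retraining: recursive least squares with a forgetting factor, which lets a model parameter follow the asset as it ages. The class name `OnlineGain`, the linear relationship between load and response, and all numeric values are assumptions for this sketch.

```python
class OnlineGain:
    """Tracks the gain k in y = k * x with recursive least squares.
    The forgetting factor discounts old data so the estimate can drift
    along with the physical asset."""

    def __init__(self, gain=1.0, covariance=1000.0, forgetting=0.9):
        self.gain = gain
        self.covariance = covariance
        self.forgetting = forgetting

    def update(self, x, y):
        p, lam = self.covariance, self.forgetting
        k = p * x / (lam + x * p * x)          # how much to trust the new sample
        self.gain += k * (y - self.gain * x)   # correct by the prediction error
        self.covariance = (p - k * x * p) / lam
        return self.gain

twin = OnlineGain()
loads = [1.0, 2.0, 3.0, 4.0, 5.0]

# Hypothetical new asset: response is 2.0 times the load.
for x in loads:
    twin.update(x, 2.0 * x)
gain_when_new = twin.gain

# Hypothetical aged asset: response has dropped to 1.8 times the load.
for x in loads * 2:
    twin.update(x, 1.8 * x)
gain_after_aging = twin.gain

print(round(gain_when_new, 3), round(gain_after_aging, 3))
```

The estimate starts near 2.0 and moves toward 1.8 as newer readings outweigh older ones, which is the behavior the update step is meant to provide.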

Digital Twin’s Tower of Babel

A digital twin combines many different models, representing all aspects of the asset, ranging from CAD and model simulation to flow-dynamics and electrical-circuit simulations. Because these models come from different tools, it is crucial that the various data and model formats interact as seamlessly as possible.

“The output of one design analysis is used by other design analysis,” says Achalesh Pandey, technology director for artificial intelligence at GE Research. “The key challenge is in stitching together the various high-fidelity simulation models, and finally, creating stitched reduced-order models [surrogate models] to perform system-level design optimization. The other challenge is to run these compute-intensive simulations in a scalable manner using optimal compute architecture.”

A number of design tools, such as ANSYS’ Twin Builder, claim to support these processes. Engineers also rely on other techniques. For example, they compile simulations into lightweight runtime modules that can interact with each other, a step that is essential for stitching models together.

That said, challenges remain. “The ability to combine the models from all the authoring tools in the digital twin flow is limited by the lack of standards,” says Witte. “Even when there are standards, they do not completely specify the semantics required for these tools to all behave the same. For example, functional mockup units for simulation and STEP formats for CAD lack the requisite semantic richness.”

When There’s Not Enough Data

Designers and analysts have the greatest success in applying AI in the creation and enhancement of digital twins when they have access to abundant data and when the processes of interest are relatively simple. Unfortunately, these two conditions are not always met.

Several data-driven approaches—such as neural networks and radial basis functions—are used to build surrogate models. To create a good-quality model, AI technology requires large quantities of trusted data to train models to accurately identify expected behavior and properties. The catch is that this volume and quality of data are often difficult to acquire and verify.

“The digital twin brings real-world physical experience to the simulation,” says Ed Cuoco, vice president, AI and analytics, at PTC. “Acquiring such data, however, can be a challenge, particularly when the model to be simulated isn’t a fielded product yet.”

System complexity compounds the problem. “The more complex the system, the more complex the AI framework, and the more data is required if using conventional [AI] techniques,” says Betts. “Thus, training AI has typically been the principal barrier to its use.”

One Solution to the Data Problem

To overcome these challenges, one company has developed a new approach to surrogate modeling that promises to help engineers build models with significantly smaller training data sets while still achieving high predictive accuracy.

The technique, developed by Front End Analytics and called Physics Informed Machine Learning (PIML), incorporates physics into the AI framework, which establishes the governing shape of the surrogate model.

PIML translates inputs from the geometry space—such as data from simulations and prototype testing—into inputs for the physics space, ranking the data based on its importance to the results. This allows the engineer to train the AI system within a physics framework and create a multi-stage physics-based model via machine learning techniques.

The model provides a proxy or approximate prediction of the product’s performance. According to Front End Analytics, engineers can tweak the results using conventional AI techniques to further reduce prediction error.

One of the main features differentiating this methodology from other data-driven approaches lies in the fact that the empirical models that fit the training data set are based on the underlying physics of the engineering problem.
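The general principle can be shown with a toy comparison. This is not Front End Analytics' PIML implementation, whose details the article does not give. The sketch assumes a textbook cantilever beam, whose tip deflection is F·L³/(3·E·I), and an invented stiffness value. One model is fitted in a physics-derived feature (F·L³), the other is a plain straight line in beam length. Both see the same three training points and are then asked to extrapolate.

```python
def true_deflection(force, length, stiffness=200.0):
    """Textbook cantilever tip deflection F*L^3 / (3*E*I).
    `stiffness` stands for E*I and is a hypothetical value."""
    return force * length ** 3 / (3.0 * stiffness)

# Three training samples (force, length), all at short beam lengths.
train = [(10.0, 1.0), (10.0, 1.5), (10.0, 2.0)]
targets = [true_deflection(f, l) for f, l in train]

# Physics-informed model: map inputs to the physics feature z = F * L^3,
# then fit a single coefficient c in deflection = c * z.
z = [f * l ** 3 for f, l in train]
c = sum(zi * yi for zi, yi in zip(z, targets)) / sum(zi * zi for zi in z)

# Purely data-driven baseline: ordinary least-squares line in L.
lengths = [l for _, l in train]
n = len(lengths)
mean_l = sum(lengths) / n
mean_y = sum(targets) / n
slope = (sum((l - mean_l) * (y - mean_y) for l, y in zip(lengths, targets))
         / sum((l - mean_l) ** 2 for l in lengths))
intercept = mean_y - slope * mean_l

# Extrapolate to a beam twice as long as any in the training set.
force, length = 10.0, 4.0
actual = true_deflection(force, length)
physics_error = abs(c * force * length ** 3 - actual)
naive_error = abs(slope * length + intercept - actual)

print(physics_error, naive_error)
```

With noise-free data the physics-shaped model extrapolates almost exactly while the straight line misses badly. This is a contrived best case, but it shows why fixing the governing shape of the model can reduce the data needed and improve predictions outside the training range.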

“These techniques have shown great promise,” says Betts. “Example cases have dramatically diminished the amount of data required to predict performance outputs while maintaining very high levels of accuracy. The predictive nature of the solution is also more robust than conventional AI, enabling us to extrapolate results beyond the range of data used for training while maintaining accuracy. The PIML models run faster than real time and are computationally simple.”
