AMD Unveils 7nm Datacenter GPUs

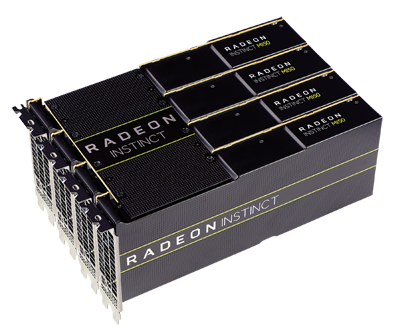

AMD Radeon Instinct MI60 and MI50 accelerators with compute performance, high-speed connectivity, fast memory bandwidth and updated ROCm open software platform power deep learning, HPC, cloud and rendering applications.

Image courtesy of AMD.

Latest News

November 7, 2018

AMD unveils the AMD Radeon Instinct MI60 and MI50 accelerators, 7nm datacenter GPUs, designed to deliver the compute performance required for next-generation deep learning, HPC, cloud computing and rendering applications. Researchers, scientists and developers can use AMD Radeon Instinct accelerators for large-scale simulations, climate change, computational biology and more.

“Legacy GPU architectures limit IT managers from effectively addressing the constantly evolving demands of processing and analyzing huge datasets for modern cloud datacenter workloads,” says David Wang, senior vice president of engineering, Radeon Technologies Group at AMD. “Combining world-class performance and a flexible architecture with a robust software platform and the industry’s leading-edge ROCm open software ecosystem, the new AMD Radeon Instinct accelerators provide the critical components needed to solve the most difficult cloud computing challenges today and into the future.”

The AMD Radeon Instinct MI60 and MI50 accelerators feature flexible mixed-precision capabilities, powered by high-performance compute units that expand the types of workloads these accelerators can address, including a range of HPC and deep learning applications. The new accelerators are designed to efficiently process workloads such as the rapid training of complex neural networks, and to deliver higher floating-point performance, greater efficiency and new features for datacenter and departmental deployments.

The AMD Radeon Instinct MI60 and MI50 accelerators provide fast floating-point performance and HBM2 (second-generation High-Bandwidth Memory) with up to 1 TB/s of memory bandwidth. They are also the first GPUs capable of supporting the next-generation PCIe 4.0 interconnect, which is up to 2X faster than other x86 CPU-to-GPU interconnect technologies, and they feature AMD Infinity Fabric Link GPU interconnect technology, which enables GPU-to-GPU communications that are up to 6X faster than PCIe Gen 3 interconnect speeds.

AMD also announced a new version of the ROCm open software platform for accelerated computing that supports the architectural features of the new accelerators, including optimized deep learning operations (DLOPS) and the AMD Infinity Fabric Link GPU interconnect technology. Designed for scale, ROCm allows customers to deploy high-performance, energy-efficient heterogeneous computing systems in an open environment.

“Google believes that open source is good for everyone,” says Rajat Monga, engineering director, TensorFlow, Google. “We’ve seen how helpful it can be to open source machine learning technology, and we’re glad to see AMD embracing it. With the ROCm open software platform, TensorFlow users will benefit from GPU acceleration and a more robust open source machine learning ecosystem.”

Key features of the AMD Radeon Instinct MI60 and MI50 accelerators include:

- Optimized Deep Learning Operations: Provides flexible mixed-precision FP16, FP32 and INT4/INT8 capabilities.

- Double Precision PCIe Accelerator: The AMD Radeon Instinct MI60 is a fast PCIe 4.0 capable accelerator, delivering up to 7.4 TFLOPS peak FP64 performance, which allows it to process HPC applications more efficiently across a range of industries including life sciences, energy, finance, automotive, aerospace, academia, government, defense and more. The AMD Radeon Instinct MI50 delivers up to 6.7 TFLOPS peak FP64 performance, while enabling high reuse in Virtual Desktop Infrastructure (VDI), Desktop-as-a-Service (DaaS) and cloud environments.

- Faster Data Transfer: Two Infinity Fabric Links per GPU deliver up to 200 GB/s of peer-to-peer bandwidth – up to 6X faster than PCIe 3.0 alone – and enable the connection of up to 4 GPUs in a hive ring configuration (2 hives in 8 GPU servers).

- Ultra-Fast HBM2 Memory: The AMD Radeon Instinct MI60 provides 32GB of HBM2 error-correcting code (ECC) memory, and the Radeon Instinct MI50 provides 16GB of HBM2 ECC memory.

- Secure Virtualized Workload Support: AMD MxGPU Technology is a hardware-based GPU virtualization solution built on the industry-standard SR-IOV (Single Root I/O Virtualization) technology.
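
For readers who want to sanity-check the interconnect ratios quoted above, the following is a rough back-of-the-envelope sketch, not AMD's own methodology. It uses the standard PCIe per-lane signaling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0, both with 128b/130b encoding). The x16 link width, the bidirectional comparison and the helper name `pcie_gbytes_per_s` are assumptions made for illustration; the 200 GB/s figure is the one cited in the feature list.

```python
# Back-of-the-envelope check of the interconnect ratios quoted in the article.
# Assumptions (not from the article): x16 links, bidirectional totals.

def pcie_gbytes_per_s(gt_per_s, lanes=16, bidirectional=True):
    """Approximate usable PCIe bandwidth in GB/s with 128b/130b encoding."""
    per_direction = gt_per_s * lanes * (128 / 130) / 8  # bits -> bytes
    return per_direction * (2 if bidirectional else 1)

pcie3 = pcie_gbytes_per_s(8.0)    # PCIe 3.0: 8 GT/s per lane
pcie4 = pcie_gbytes_per_s(16.0)   # PCIe 4.0: 16 GT/s per lane
infinity_fabric = 200.0           # GB/s peer-to-peer, as cited in the article

print(f"PCIe 3.0 x16: {pcie3:.1f} GB/s")
print(f"PCIe 4.0 x16: {pcie4:.1f} GB/s ({pcie4 / pcie3:.1f}x PCIe 3.0)")
print(f"Infinity Fabric Link vs PCIe 3.0 x16: {infinity_fabric / pcie3:.1f}x")
```

Under these assumptions, PCIe 4.0 comes out at twice the PCIe 3.0 rate and the 200 GB/s Infinity Fabric figure at a little over six times PCIe 3.0 x16, which is broadly in line with the "up to 2X" and "up to 6X" claims.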

Updated ROCm Open Software Platform

AMD announced a new version of its ROCm open software platform designed to speed development of high-performance, energy-efficient heterogeneous computing systems. In addition to support for the new Radeon Instinct accelerators, ROCm software version 2.0 provides updated math libraries for the new DLOPS; support for 64-bit Linux operating systems including CentOS, RHEL and Ubuntu; optimizations of existing components; and support for the latest versions of the most popular deep learning frameworks, including TensorFlow 1.11, PyTorch (Caffe2) and others.

Availability

The AMD Radeon Instinct MI60 accelerator is expected to ship to datacenter customers by the end of 2018. The AMD Radeon Instinct MI50 accelerator is expected to begin shipping to datacenter customers by the end of Q1 2019. The ROCm 2.0 open software platform is expected to be available by the end of 2018.

Sources: Press materials received from the company.


About the Author

DE’s editors contribute news and new product announcements to Digital Engineering.